This edition of Platformer is about AI. My fiancé works at Anthropic. See my full ethics disclosure here.

One obvious reason for the public’s rapid turn against AI is the fear that it will someday take people’s jobs. It’s a fear the AI industry has encouraged: tech CEOs issue regular warnings about AI-related job loss, and that job loss is already starting to show up in Silicon Valley.

In March alone, tech companies announced nearly 46,000 layoffs — the worst single-month total in more than a year — with a growing number of executives citing AI as a factor in their thinning headcounts. Anthropic’s Economic Index shows the share of work-related AI conversations climbing into nearly every white-collar profession. And a steady drumbeat of research suggests that entry-level work — the rung of the ladder most exposed to LLMs — is showing the earliest signs of disruption.

In one sign of how seriously the tech industry is taking this, Google DeepMind workers in the United Kingdom just voted to unionize.

At the same time, AI has been notoriously difficult to find in the productivity statistics. Amazon says it will hire about the same number of software engineering interns in 2026 as it has in recent years. Openings for software engineering roles are currently the highest they have been in the last three years.

So what gives? Are jobs disappearing, or just transforming? Are workers becoming less essential to their bosses, or more? Are we witnessing the beginning of a massive disruption, or just another hype cycle?

These are the questions we’re setting out to answer in a new mini-series on Platformer. Over the next seven weeks, I’ll be talking with CEOs, operators, and academics watching this transition up close. In each episode, we’ll consider the AI and jobs story in the kind of depth that often isn’t possible in a single news story. And we’ll also bring data: my colleague Ella Markianos will join me at the top of each podcast to review the latest surveys, research, and news stories that speak to the intersection of tech and labor.

For our first episode, I wanted to talk to someone I’ve known about as long as I’ve known anyone in Silicon Valley: Aaron Levie, the CEO of Box. Aaron was the first person who explained software-as-a-service to me when I moved here in 2010, drawing diagrams on a whiteboard in the Box office with the kind of patience usually seen in a teacher showing kindergartners how to spell.

Sixteen years later, he remains an enthusiastic explainer of the SaaS world. It helps that Box has a good story to tell: the company’s stock has held up materially better than most of its SaaS peers over the past year, even as a chorus of investors, founders, and posters has warned that traditional enterprise software is about to be eaten by AI agents.

As you’ll hear, Levie is not in that camp. In our conversation, he makes a careful — and at times provocative — case for why he thinks both the “SaaSpocalypse” and the broader narrative of mass AI-driven job loss are wrong. He argues that agents will multiply the number of workers using business software rather than eliminate them; that the “last mile” of human work is far more durable than people assume; and that the engineer of the future is more likely to work at a pharma company than at Meta.

“If you or I go and vibe-code something, we think we’ve replaced the engineer, replaced the accountant, replaced the lawyer,” Levie told me. “But then you actually look — that was the first 80% of the job. The extra 20%, it turns out, is all the value creation of that profession. All the expertise and domain knowledge is in that last 20%, not the text that got generated.”

Highlights of our conversation are below, edited for clarity and length. We also hope you’ll listen to the entire conversation wherever you get your podcasts (just search for Platformer) or watch it on YouTube at youtube.com/caseynewton.

And let us know what you think — we’re new to podcast production, and welcome your feedback at casey@platformer.news.

Casey Newton: Aaron Levie, welcome to Platformer.

Levie: Hey, good to be here.

Newton: Aaron, you and I first sat down in 2010 —

Levie: We were so young.

Newton: We were so young. Back then you were sort of early gray, but now you’re just like, normal gray. I think running a public company will probably do that to you.

Levie: The problem is, I’ve been like this for 13 of those years. It would be one thing if this only happened in the past six months, but it’s actually been like this since I was 24.

Newton: Maybe there are more reasons to be gray today, or maybe not — we’ll get into it.

That first time I met you, I have this core memory — because I had truly been in Silicon Valley for what felt like weeks when I came down to the Box office.

Levie: Didn’t you come from, like, the Arizona Star Tribune or something?

Newton: The Arizona Republic. I’d been covering local government. And then one day I said, “What’s going on with computers? That seems interesting.” And now here I am. But I needed people to explain it to me, and that’s where you came in. As I recall, you explained the software-as-a-service business model to me on a whiteboard. So my first question: if you were explaining your business to a reporter like that today, how much of that whiteboard would look the same and how much would be totally different?

Levie: If my recollection serves, a lot of it was trying to compare the on-prem days to cloud, and why cloud was such a big deal. My predictive capabilities were pretty locked in, maybe short of AGI. The whole idea was that software was going to move from your data centers to the internet, and in the process, the real power is that it becomes available to way more companies — businesses of all sizes, lines of business that never could have used software before, end users. This was the phase of consumerization of IT. So that played out.

Now we’re in the next frontier of what software is going to look like. A lot of the core architectural components hold. If you’re running a global supply chain at a Fortune 500 company, you want deterministic systems and software that power your ERP. If you’re at a large B2B like Salesforce, you want a clear set of business logic around how your CRM works, and how your internal workflows around sales automation work. If you’re managing documents for a government agency or a pharma company or a law firm or a large bank, you want to make sure you can secure that data, protect it, govern it, ensure it’s in a safe place and available to the right people. All of that is staying the same.

What has completely changed is the interaction patterns on those systems — where the interaction is coming from. And what you can now do with all that data. The big idea is that in the future, if today maybe 90% of activity on this software is humans interacting with the interfaces, probably three years from now it’ll be 90/10 the other direction. Agents will be interacting with these systems, talking to the data, pulling up data from these tools. And maybe 10% will be you going and browsing and looking through the software yourself.

The interesting thing — and this is going to be the open debate for the industry — is in that 90/10, did the human side go down by 90%, or did we just have a 10x increase, where agents are now leveraging these tools? My argument is more the latter: agents are this explosion of new workers all using these systems, which makes the technologies even more valuable. You have all these new workers on these digital platforms that need data, that need to be secure, that aren’t leaking information in the wrong way. So you still need those guardrails — but now you’ve got a massive multiplier of what people can do with their data, because you have agents that can run in parallel.

Newton: Right, that makes sense to me. There’s this really interesting challenge —

Levie: By the way, podcast over?

Newton: Yeah. That’s all the time we have. I really want to thank you for joining us. I think we all learned a lot.

No — let’s throw in a few more questions for the super fans, because you just introduced what seems like a possibly profound change in the business model for what you all do. SaaS companies have gotten used to selling by the seat. You have 10 employees, you want 10 of them to be able to use Box, you pay a monthly fee. And it seems like that business model is under a lot of pressure in a world where maybe I don’t have 10 people in those jobs anymore. What I need is a business outcome. So how are you navigating that? Do you think this seat-based business model survives in SaaS?

Levie: You posited the scenario that’s most open for debate, which is: did the people go away? In the math I laid out, the people stayed the same number, but the agents multiplied on top of the platforms. There will be some software categories where the literal seats are not as relevant because you don’t have as many people doing the work. I would actually argue that for a large portion of software categories, that won’t be the case. You’ll have the same number or more people, but you’ll also have 10 times the number of agents as people. So it’s a multiplicative effect of more people — or the same number of people, or maybe a minor reduction — and then vastly more agents.

The part that’s not being priced in by the market is, is that scenario playing out? If I look at our software consumption internally at Box, there aren’t a large number of cases I can make for many of our software products to reduce the number of people that exist as seats. But there are a lot of cases for a lot more agentic use cases on that software.

To take an objective example that’s not Box: if I look at Salesforce, we’re actually going to have more sales reps at the end of this year than we had at the start. That’s more seats within the Salesforce universe. At the same time, I can imagine 10 to 100 more agent use cases on the Salesforce platform than I could have two years ago. Those agents might not be roaming around the interface of Salesforce — they’ll show up inside Claude Cowork or Codex or ChatGPT. The agent will be interacting via a different interface, but the underlying seat that says “Aaron is a user in this platform, with this level of access to this type of data” doesn’t necessarily go away.

We’re already seeing this with our customers: you want a seat for the person because you want some kind of stateful representation of what data that person has, what their entitlements are. But then an agent might do an unbounded level of consumption on the software — where I, as a person, can only click so many things per day, but an agent can do that at 100x the scale. So the seat gives me the ability to use my information across these other agents. But then at some scale, there’s so much data being used that there’s a consumption model on top. This is why I think you’re going to have a stacking business model in software: humans still have seats, but agents will be a consumption pattern on top of that.

Newton: As a CEO, I’m imagining you’re looking at all the SaaS you guys buy to run your business. I imagine you might be happy if you didn’t have to pay for all those seats and could just have agents do it. So when you look at your own spending on SaaS, your feeling is truly, “I’m happy to keep spending for all the seats”?

Levie: There’s a difference between happy and practical. I’d always like our IT spend to be less, but I’m extremely practical about how technology works. The bear case of software is a confusing amalgamation of multiple issues people have — it’s a Rorschach test of “what do you hate about software?” Some people say, “What we’re going to do is vibe-code CRM systems.” Others say, “We’re just not going to have employees, it’ll just be agents.” Others say, “We just don’t need all the features of these SaaS systems, agents will do those.” Some I’m sympathetic to, some I’m not.

The one I’m extremely not sympathetic to: we have no projects internally that I’ve approved to vibe-code a replacement to an existing SaaS service. If I look at the stack of our ERP system, HR system, CRM system, document management system, it would do us no good to spend our time and IT resources trying to replicate functionality that’s already doing its purpose — especially at a moment when I’m about to get 10 times the value from those systems with agents using that data. If I have to both transition a system that’s homegrown and figure out the next set of use cases, you’ll just halt your ability to innovate.

And a minor aside: if you did a word cloud of the past two to three weeks in AI, one of the biggest words would be cybersecurity. Not the Mythos part — the “we leaked customer data, the credentials, the secrets of our system got leaked, we downloaded a package that was exploited.” Think about if the entire economy was trying to rebuild their own version of Salesforce or Workday or an ERP system, and any one of those events happened. Now the entire economy has to halt and do upgrades, or handle the maintenance and ongoing improvement of these technologies. That’s just not very logical economically.

The part I am sympathetic to: there’s some software where, as you use agents more, some of the value proposition goes more into the agentic layer than the software layer. In those cases, you’d compress the value proposition of that software, and at the next renewal you might not spend as much. But conversely, for every scenario where that happens, there’s another scenario where agents add more value on the system you’re using. So on net, the vendor actually has more leverage in the future. You might save on one part of the stack but end up re-spending it on a different part because of all the upside.

Newton: What we’re really getting at is the skepticism the enterprise software market is facing right now. The reason I wanted to talk to you first is that Box has been facing this kind of skepticism in various ways its whole existence. You had to survive a very early pivot from being a consumer company to an enterprise-focused one. You had to convince people the cloud would be a safe and profitable place to be. And you faced a lot of skepticism about whether Box might just be a feature rather than a company. Now you have AI come along introducing this fresh wave. Maybe the most accelerationist version of that argument is that every company is now a feature, and the only thing that matters is going to be the frontier labs. So to what extent is this SaaSpocalypse story just the latest incarnation of a story that’s never been true, and to what extent is this AI moment truly something different?

Levie: The market is somewhat parsing the different outcomes — not perfectly, but there’s some discerning behavior. If you took Wall Street as one metric and looked at our stock, it’s held up better than most. One of the reasons is that the thing not really under debate is that your most agentic, vibe-coded enterprise future still has to store the data somewhere. You still have to secure and govern the important information of whatever the workflow is. You can vibe-code the creation of the contract, but the contract still has to get stored somewhere, governed somewhere, still has to have a retention policy, access controls.

The part I’m excited by is that becomes meaningfully more important in a world of agents. When I think about the use of data in the enterprise, what all these agents really want to do is access data. They want to read data, write data, know context about your organization — your best practices, your policies, your customer relationships, your research. All of that sits inside your enterprise data, and most of it sits inside unstructured data in the form of business content. So we’re firmly on the side of: bring on all the AI humanly possible, because those agents are all going to be working with enterprise content that still needs to get stored somewhere.

A customer comes to us and says, “We want to automate our entire insurance claim process.” A tremendous amount of enterprise content goes into an insurance claim. When they do that automation — maybe they build the agent on Anthropic, maybe on OpenAI — that agent still needs to talk to all the data in their enterprise. So they have to upgrade their infrastructure.

There’ll be winners and losers in software and SaaS, as has been true of every era of disruption.

Newton: Tell us about some of the losers. You don’t have to name —

Levie: Rather not.

Newton: I know you’d rather not. But basically what you’re saying — and I believe this — is that your business has access to this very rich, valuable data, and that data is not, for the foreseeable future, going to be stored at one of the frontier model companies. So you have a lot of value to create based on that unique advantage. I’m guessing there are other SaaS companies that don’t have that same advantage. As you’re scanning across the market, is there a business where you’re like, “I don’t want to be in that business in a world of agentic AI”?

Levie: I’ll give you a framework, but I’m not going to name names. The factors you want are: Do you have some degree of business logic or workflow in the system, because the agent still needs to do that, even in an agentic world? Do you store data? Are you the natural place for the information to get stored? Do you have a set of domain experience and context that the next training run of the agent doesn’t just replace? Is there an element of security, governance, trust that matters a lot? Are there network effects? This is one reason Slack has been incredibly durable: we’re already communicating in Slack, so agents naturally show up in Slack, as opposed to “I’m going to agentically do Slack.”

There’s probably one more element: how much does the system benefit from a world of multiple agents needing the data, as opposed to one agent needing the data? Because that points to whether the enterprise wants to put all your value into one of the labs, or whether it needs to be a different layer that everything talks to. This is why you still see companies like Databricks and Snowflake growing quite well. You don’t really want to move your data around constantly. You want it abstracted from where the agent is, so you can structure it, secure it, govern it, and then let all the agents talk to it.

So you probably could have a quotient for “how durable is the platform, based on those factors?”

Newton: Right. The Levie formula.

Here’s what I’m taking away: if you have a to-do list app for teams, get out of that business.

Levie: I would say that business is actively pivoting.

Newton: Yeah, I actually think I know one that might be. Box has talked about AI for a long time, but I’m curious for you personally — we do have these momentary enthusiasms in Silicon Valley. I think it’s fair to say both of us had a crypto phase. I’m imagining your AI phase started earlier but took longer to reach fruition. Did you have a moment of conversion where you saw a paper, a product, something where you’re like, “Okay, I need to start taking this really seriously”?

Levie: There have been three moments. About eight years ago, vision models were getting good enough that you could give the vision model a document and it could OCR it, or give it an image of a retail product and it could classify it properly. That was a big deal. But the problem was you had to train individual models for every domain — this was just before the transformer. If you wanted to do document classification in legal, you needed a different model than for financial equity research analysis. So you never had the takeoff moment.

Big deal number two: ChatGPT. We were following GPT-2 and GPT-3, and we had a hackathon where somebody did GPT-2 inside of a document, but it was producing garbled text. It could maybe type ahead five extra words — not going to game-change your productivity. ChatGPT was the first time in this era where it was like, “Okay, this is a very big deal. We’re going to be able to wire up these LLMs and connect to your data.”

The most recent moment, the past year-plus, was marked by Claude Code — but really these more agentic patterns. The LLM runs in a loop, the agent has access to a set of tools — on your computer or in the cloud — and you can hand off long-running tasks. The efficacy has been improving exponentially. This might just be the final form factor of AI: an agent that could run for a minute or a month, has access to any data you need, has access to all the tools you work with, can act as you or as its own entity. It’s just an LLM constantly running in a loop, making decisions, and you intervene to steer or review. This appears to be the architecture of the future of AI.

Newton: I’ve come to believe that basically everyone should have the experience of building a website using an AI agent of their choice. A lot of things clicked for me when I started watching a computer use itself. You recently posted about how, somewhat strangely, AI doesn’t seem to be helping any of us work less. You mentioned that you’ll start working on something with an agent that you think is simple and you lose three hours to it. Was that a real project? Can you share what it was?

Levie: It’s so pedestrian I’m embarrassed to share, but I was going into a city a week later and needed to map out a bunch of customers I should be visiting. I was using Perplexity Computer, which does some pretty good workhorse stuff, and I gave it the task: “Rank-order all the top 50 companies in this region. Get me the LinkedIn of every single CIO of those companies, so I can make sure — okay, who have I connected with?” I didn’t even know what I’d do next, but I wanted to get a good map. That task took maybe 15 minutes — you prompt it once, you get back some data you don’t really like, you re-prompt, it does better, you do it a third time, and boom, you’re off to the races.

So maybe 15 to 30 minutes of AI work. But then the very next thing — I had to do something with this data. I spent the next two hours emailing all the people and filling up my calendar more. It was the kind of task where I thought, “Oh, use AI to accelerate this thing” — and it kind of worked too well, to the point where I had created more work for myself. It would almost have been better if it came back with full hallucinations, because then I could have just gone to bed and been like, “Well, that failed.” But it worked. And by 10 PM I was like, “Well, now I’m going to feel bad if I don’t actually do anything with the tokens I just exhausted.”

It’s a small anecdote, but I think this is happening everywhere. You’re like, “Oh, I’m going to tell AI to write this little web app.” Then you’re like, “Well, I built it. Now I’ve got to add this other feature. I should probably get it hosted somewhere. I might need to change this one thing.” It’s basically If You Give a Mouse a Cookie applied to the economy. You just start building up more and more work.

The part I find really interesting — and this tangentially relates to why I think the job-loss argument is wrong — is that people will find there are way more tasks they could be doing that they just never could do before, because the fixed cost of starting the task was too high. AI made it easy to get going. They lit up the project, did the research, the analysis, reached out to the customer. That kicks off a cycle of downstream work, or a new set of constraints that start to emerge.

To bring this home: the task worked so well that I idly wonder, should I have a full-time person just doing this with an agent? They’d use an agent, but I don’t want to do this every night for the rest of my life between 9 PM and midnight. It might be valuable enough that it’s worth a person to do this for me — in which case, it actually created a job because of my experimentation with this AI I was doing for fun.

Newton: I love that story. When I built my own website, it was absolutely If You Give a Mouse a Cookie, because of course after the website was done, I said, “Well, I need to host it.” And then, “Well, it should probably have a blog.” So I added a blog. Then, “Why isn’t it telling me the current weather in San Francisco? I have to solve this problem for the three annual visitors to this website.” It was super fun. I don’t regret the time. I also didn’t, I think, create a lot of value for myself.

On the flip side, I run what I sometimes think of as a somewhat fake business, in the sense that people pay me to email them. So it’s a very strange, uncomplicated business. I have a bookkeeper and an accountant, and they send me a monthly email letting me know how things are going, but I’ve never really done real financial analysis. And then Claude Cowork shows up, and I can just start chucking spreadsheets into it. For the first time since I started my company five years ago, I’m like, “Tell me about my business.”

Levie: “What’s our revenue?”

Newton: Yeah, completely. It pushed me to make some changes, including, by the way, starting this series of conversations that you were the first person on. So: what advice do you give for people when they’re like, “Okay, I’m bought in, I’m going to try this stuff. But how do I know when I’m just spinning my wheels versus creating something of durable value”?

Levie: This one is hard to answer generically. But this will be a defining question of the next decade. If you have access to abundant intelligence, but it’s not free — it’s abundant but not free — how do you allocate the spend across the organization?

On one hand, it’s easier the higher up in the organization you go, because you have all the data about what the company does well and what doesn’t. On the other hand, it’s somewhat easier lower in the organization where the work is actually happening, because you can self-identify the work that matters. The problem is, both of those have issues: the direct user might think their work is the most important for the tokens, and the person high up might not know about the new innovation somebody has.

The world was a little easier with scarce intelligence, because everything was kind of slow, and it had to be slow. Now with abundant intelligence, you could have everybody running around spinning up agents doing lots of work — maybe 70% of which is not valuable. But you don’t know which is the 30/70 until you’ve done the whole set of things.

Newton: Is one potential solution to just put up a leaderboard of who has burned the most tokens in a given month and reward them somehow?

Levie: (jokingly) That is emerging as the best practice. The alternative is just token-maxing, and you’re good. But token-maxing aside — there’s a tool set I’m sure 10 startups are working on right now. They’ll see this and they’ll all pitch us, but it’ll be a good business for a few of them. There’s a new kind of ERP, HR, finance system that lets you have a heat map of where the tokens are going, and the rough value allocation of what that produced. Right now it’s a comical idea — “Oh, you’re going to have to treat tokens like headcount.” But we’re only going to be able to apply compute rationally to the most important areas of the business. Right now it might be 1% of your company spend, but if in three years it’s 10% of the spend, we don’t joke around with where 10% of our labor force is going. The same will be true of your tokens.

Newton: Let’s talk about jobs. You recently posted about a kind of Gell-Mann amnesia for AI. Gell-Mann amnesia, of course, is where you read about something you know a lot about and notice the obvious errors, but assume that same source is credible on other topics. You wrote: “People use AI for their job and see all the various things they have to do in the last mile, but then look at someone else’s job and think that AI will eliminate it immediately.” Why are some people so quick to think AI can automate away a whole job?

Levie: This is amazing technology — the coolest technology I’ve ever played with in my life. So it’s deceptively cool. You’re like, “Oh my God, I think I just did my taxes,” or “Oh my God, I just built this amazing marketing website in five minutes.” Then we look at the output and we’re like, “Gosh, that must totally replace the job of XYZ profession.”

There are a few core flaws with that. What is that profession doing for all the hours in their day? How much of it is just doing the final calculation of your taxes, versus getting all your data in order, reviewing all the work, asking you questions back and forth, knowing the right questions to ask, dealing with the three missing things you didn’t even remember to add — but if an AI system had done it, it would have totally glossed over? That’s what the profession does. The automation of one or two or five of the steps are just individual tasks.

In the case of development, you or I can tell Claude Code, “Generate me the XYZ product.” And we could be like, “Wow, that must automate the engineer out of existence.” Well, the code quality is probably horrendous. The ability to ask it to do 40 other things over a 12-month period is going to stack in complexity. The moment you actually want to get that software hosted, make sure there’s no downtime, ensure you have a good distributed system — that’s already 100 times more complex than just prompting the code to get written. The moment there’s a security event, somebody has to wake up and respond. I can name 30 other things a developer has to do.

A lot of people say the job of the engineer was never to write code, it was to do X. But no — they were writing code most of the time in the prior world of work. The problem is they were highly constrained by how much code they could write in a day, and they were automatically bottlenecked from doing the other things their job could be. So what is the future engineer? It’s to understand what you’re trying to build, to make sure it gets built properly, to ensure there are no security issues, to ensure it gets released, to ensure it’s high quality.

If you or I go and vibe-code something, we think we’ve replaced the engineer, replaced the accountant, replaced the lawyer. But then you actually look — that was the first 80% of the job. The extra 20%, it turns out, is all the value creation of that profession. All the expertise and domain knowledge is in that last 20%, not the text that got generated.

And the converse: I’ll use AI for analysis of a market I’m thinking about. If I just took the output and ran with it, I know it would not work, because I know it’s missing context — either I didn’t give it, or I know something else about a different trend. But somebody else might see that and say, “Wow, Aaron’s job is incredibly easy, AI just gave me the answer of what he’s going to go do.” And I’m like, “No, my job is way harder, I promise.”

There’s a different axis people need to think about. If you took today’s static work, maybe it would be: you’ll get the first 90%, then we’re going to automate the next 9%, then the next 0.9%. But there’s a dynamic part of the equation. The market is starting to ask more from the provider, because they now know what’s possible. So just as you automated the first 90%, the market shifts on you, and that 90% is now the new 50%. The demands of what you ask an engineer to do go up tenfold, because you’re like, “I think you can do that thing way faster now, so I’m going to give you a much bigger project.” You have this dynamic system: our needs and demands are growing as a result of what we can automate.

Newton: I love the idea that AI will let us reenvision what our jobs could be in a more expansive, creative vision. My fear is that the last mile of human supervision will turn out to be kind of boring. We’re already starting to see this in some jobs — there’s a piece that’s automated, and my job is now just to review AI output, and that is just pure drudgery. Does that complicate the picture you just painted?

Levie: It adds a wrinkle. The big question is: are there jobs in the future? The answer is yes. Now the question is, do we want those jobs of reviewing the output of AI agents? Maybe we all just opt out of the economy because we don’t want that job. So there’s an interesting philosophical question about the new way to get fulfillment and creativity out of these jobs. You see burnout among engineers on X, basically saying, “All my job is is to review slop from the AI.” There’s a limit to how fun that is.

But engineering is a unique job compared to the rest of the economy. Most of an engineer’s day is to think about a problem, think about a system, write code — and that code is text. You’re just writing a lot of text, and somebody else reviews the text and you ship it. So if the agent did the writing and reviewing, then all you’re really doing is reviewing the text and shipping it.

But go talk to an investment banker, or a lawyer above paralegal, or a doctor or a nurse, or a pharma researcher — they would love to get out of the toil. They’d say, “I don’t want to spend 15 hours generating a corporate pitch deck for this client — that is just me moving images around on a PowerPoint, doing some Google searches to find market trends, and pasting that in. I’d love to automate that. The job I should be doing is getting in front of my clients and making sure we’re delivering unique value and insights to them.”

That’s why I’m not overly worried about this. We’re seeing some hyper-accelerated dynamics in engineering that don’t always relate to other forms of work. If you talk to a doctor and ask, “How much do you enjoy typing up the patient notes after the patient meeting?” — they want to automate the heck out of that. They want to be done with that part of the job. Mostly it’ll be a net positive change as people are able to get rid of the stuff they hate doing, and the demands of the job evolve in a way that makes it much more exciting.

Newton: It’s the sort of thing I love to hear. I would love to not live through a massive disruption where we see super high unemployment. I also can’t help but note we saw almost 46,000 tech layoffs announced in March alone, with AI sometimes cited as a potential cause. So would you put a number to it? On the software engineering front, do you think in three years we have about as many software engineers as we have today, or more? Or do you think there’s a bigger shift?

Levie: I think we’re going to have more. I don’t want to be unsympathetic to people who really will face these changes. But big picture: if you were a CS grad of the past two decades from a top 25-to-50 CS school, by and large you were trying to go to a tech company — in Silicon Valley or a couple other places. So most of the software talent in the world of this cohort ended up building software for consumers. We were building ad apps, ride-sharing, enterprise software (thank God). We’ve accumulated a lot of engineers on that kind of work, and some of those companies have over-hired.

Who’s the loser of that equation? Every other company on the planet, because they couldn’t compete with Google and Facebook and Microsoft for that top engineer. They couldn’t automate things in the life sciences process, or the supply chain, or automotive AI systems. I don’t know how much software you’ve used from companies that aren’t in the Valley, but if you log into your bank and you’re happy, you’re a totally rare person. If you look at most car console designs of any car that’s not from two companies, you can imagine how unusable these systems are. That correlates to the fact that those companies couldn’t overstaff with all the top engineers and designers.

Now what happens? All of a sudden, Claude Code and Codex come in, and what was previously maybe a 30- or 50-engineer problem is now a 5- or 10-engineer problem. For the first time ever, those companies are able to take on work that wasn’t possible before. They can bring automation to all the systems and workflows they couldn’t have afforded or justified.

So in some parts of tech, you’ll see a temporary dislocation. At the exact same time, the thing you should be tracking is the number of engineering jobs opening up at companies outside the traditional Silicon Valley tech world: small businesses, consulting firms, life sciences, manufacturing.

And as a force multiplier, you’re going to have a number of new types of engineering jobs where the job is entirely about how to deploy agents inside the firm to automate work. I did this for fun just to make sure I wasn’t full of shit. If you go to the Eli Lilly careers page, as one does, they have this job title called “lab automation software adviser.” That person is an engineer whose job it is to bring automation through AI to the lab process.

Think about how many hundreds of thousands or millions of jobs will look like that in the future. My job is to take the innovation coming from AI-land and apply it to this particular business process in my organization. You’re kind of like an FDE — a forward-deployed engineer — but for that company. Those will be the people who would have gone to Meta or Google five years ago. They’re going to now work in pharma, banking, manufacturing. And those are actually incredibly stimulating jobs. You’re not just building an app, you’re automating drug discovery.

Newton: So you’re saying even in the future, even just with my small newsletter business, I might one day be able to fulfill my lifelong dream — which is to compete directly with Palantir and just build a vast surveillance and analysis program?

Levie: If you so choose, you can. What’s the fastest-growing role at something like Anthropic or OpenAI? It’s these FDE roles.

Newton: Forward-deployed engineers.

Levie: Yeah, you need humans to go implement this stuff inside the organization. Those are the engineers of the future, if you’re not just building software that is an application.

There’s a funny article out of the FT (I don’t know how much I trust their views on technology) saying that lawyers are being inundated by clients asking them questions because they went to AI, and the lawyers have to verify the advice is good, or review the contract that got written. What we’re doing is lowering the barrier for everybody to participate in these things in a touristy way. I can be a tourist in software development, in legal, in healthcare. But the output still eventually needs to get verified, or the last mile of the work actually has to get done, and that eventually requires some kind of semi-expert.

This is why I don’t think we yet know what degree you should go into in college — I don’t think any of the degrees are off the table. You should totally go into CS if you’re really excited about software development. You just shouldn’t expect to go build a little app that you press a button on. You should expect that you’re going to use CS skills to go do clinical trial automation at a pharma company.

Newton: I think that’s a great place to land, because it leaves me with a feeling I have so rarely when thinking about the tech-enabled future lately, which is optimism. So thank you, Aaron, for giving us a jolt of that. It’d be great to check in with you again in a year and see if the picture is still as rosy. Aaron, thanks so much for joining us.

Levie: Thanks, man.

Following

Google fights an AI-generated zero-day

What happened: Google says it found an AI-generated zero-day exploit that could have triggered a “mass exploitation event.”

Google found “prominent cyber crime threat actors” were planning to conduct a “mass vulnerability exploitation operation.” The exploit would have enabled attackers to bypass two-factor authentication on a popular open-source tool.

Google’s Threat Intelligence Group says it tipped off the affected software maker and worked with them to prevent the attack.

The company said it is confident that a Python script the attackers created was AI-generated — because of its characteristic “textbook” AI coding style, and also because, despite the code working, there were some hallucinations (lol).

Google said AI is increasingly helpful for discovering vulnerabilities of the type used in this exploit, work that was previously done through manual review by human experts. Unlike traditional scanning software, AI can sniff out code that has high-level logical flaws. AI can find types of errors that “appear functionally correct to traditional scanners but are strategically broken from a security perspective.”

Why we’re following: The exploit Google found is confirmation of a growing threat: AI can find and exploit vulnerabilities that previously only humans could.

What’s more, hackers are starting to experiment with going beyond just discovering vulnerabilities, to actually using AI to orchestrate cyberattacks in real time.

Google said its research has found actors associated with China and North Korea doing “sophisticated” experimentation with AI. It has also seen actors experimenting with orchestrating attacks autonomously and using OpenClaw to refine attacks.

What people are saying: On X, John Hultquist, Google Threat Intelligence’s chief analyst, wrote, “I think most of us are surprised we have not found more evidence” of bad actors using AI to discover exploits. “We believe this is the tip of the iceberg. Other AI-developed 0days are probably out there.”

He added, “If criminals are doing it, then state actors with significant resources probably are too.”

OpenAI needed “big computer”

What happened: Sam Altman appeared in court today for the trial where Elon Musk is seeking to remove Altman and co-founder Greg Brockman from their jobs at OpenAI.

The NYT’s Mike Isaac writes about the contrast between Altman’s and Musk’s testimony: while “Musk openly sparred with opposing counsel,” Altman “has taken a different tack. His answers have been terse, quiet and noncombative.”

Musk’s lawyer Steven Molo questioned Altman about several executives and board members who said under oath that Altman had lied to them.

At one point, Molo asked, “Are you completely trustworthy?” Altman replied, “I believe so.”

In possibly our favorite detail so far, Altman described an evening meeting at Tesla, during a time in which Elon Musk was trying to fold OpenAI into his car company. The meeting apparently contained “a long, long period of time with Elon showing us memes on his phone.”

Elsewhere at trial, Microsoft CEO Satya Nadella testified that Elon Musk never told him about concerns that Microsoft’s investment in OpenAI violated OpenAI’s charitable commitments. (Musk is suing Microsoft in addition to OpenAI.)

OpenAI co-founder Ilya Sutskever said in court that his stake in OpenAI is now worth about $7 billion. He gave Judge Yvonne Gonzalez Rogers a memorable explanation of the difference between the AI of OpenAI’s early days and now: “I would describe it as the difference between an ant and a cat.”

Sutskever told Judge Gonzalez Rogers why OpenAI needed outside investment after Musk’s departure. “If there is no funding, there is no big computer,” Sutskever said. “You don’t need the biggest computer, but you need a big enough computer.” And, well, “If you don’t have a big enough computer, it was not going to work.”

Why we’re following: Platformer would like to start a petition to replace all mentions of “compute” with “big computer” from now on.

The evidence that Musk was trying to fold OpenAI into his company isn’t great for his case that Altman and Brockman “stole a charity.” Although he apparently could have managed his time better while he was doing it! But in fairness to Musk, we cannot ourselves plead innocent to repeatedly showing coworkers memes on our phones.

What people are saying: On X, programmer and investor Paul Graham, who chose Altman as his successor at startup incubator Y Combinator, wrote: “One of the things Musk vs Altman shows is how much more promising AI is than anyone expected.” Graham added, “Sam could have started it as a for-profit company. His life would be much simpler now if he had. But he didn’t realize in 2015 that AI would warrant more than you can raise in donations.”

—Ella Markianos

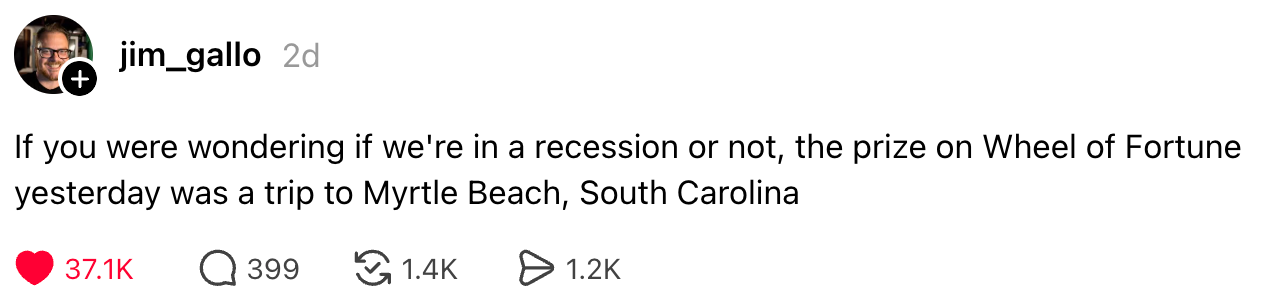

Those good posts

For more good posts every day, follow Casey’s Instagram stories.

Talk to us

Send us tips, comments, questions, and job automation arguments: casey@platformer.news. Read our ethics policy here.